Time to write a little bit about this little project of mine.

tl;dr

Multithreading more responsive in a Python 2.7. 30% more requests per second. Satisfaction guaranteed!

Introduction

After leaving my old job at CCP Games last year, I had the urge to try to collect some of the stuff that we had done for Python 2.7 over there and make it available to the world. So I started this little fork off 2.7.

The idea was to have a place to add “improvements” to vanilla (as opposed to Stackless) 2.7, so that they could be kept separately and in sync with CPython 2.7.

Thus far, what I’ve been mostly focusing on is modernizing thread support. (for a full list of changes, see the whatsnew file).

When we were working on DUST 514 for the Playstation I had to make certain improvements to make networking work more efficiently on that platform. We were interfacing stackless python with the native http api of the PS3, and had to use blocking worker threads. Marshaling from those threads to tasklets was causing unnecessary latency.

We ended up doing a lot of experiments with condition variables, in the end, providing native C implementations to minimize GIL thrashing and reducing wakeup latency to the minimum.

In PythonPlus I have done this and some other stuff in order to improve threading performance.

The threading related changes cover among other things:

- Adding timeout parameters to blocking calls as in the 3.x api.

- Adding a native threading.Condition object

- Improving the GIL

Adding a native Condition object aims to reduce the thread thrashing that is otherwise associated with condition variables, since a lot lof locking and context switching needs to happen for a thread to wake up with the normal .py version of those constructs. To do this, however, the internal non-recursive locks need to be implemented using a lock and a condition variable themselves, rather than using native semaphore objects.

Changing the lock types used required the GIL to be visited, since the behaviour of the old GIL was just a random side effect of the choice of internal locks. This also allowed me to address the old Beazley problem while at it.

The GIL change is minor. It is simply a separate function, and when a CPU bound thread wishes to yield the GIL to another thread, it calls a new api function, _PyThread_yield_GIL(). Threads that are trying to re-aquire the GIL after unlocking them, are considered to be IO threads and have priority for the GIL when a CPU thread yields it. But if no such thread is present, then the GIL won’t actually be yielded 99 out of every 100 yields. This minimizes unnecessary thrashing among CPU threads, while allowing IO threads to quickly get their foot in when required.

Performance

I quickly got this all up and running, but then I had to prove it to be actually better than regular 2.7. To do this, I set up two test scenarios:

- Tools/plus/giltest.py – a test platform to measure performance of concurrent cpu threads as well as the performance of pairs of producer/consumer threads synchronized either with threading.Condition or threading.Lock

- Tools/plus/testserver.py – a multithreaded webserver using a pool of thread and socketserver.py, being exercised by ab.

On windows, I found it easy to see improvements. I got the GIL to behave better and I got the web server to increase throughput. producer/consumer pairs using Condition variables got a real performance boost and those IO threads got a priority boost over regular CPU bound threads as expected.

However, my virtual linux box was more disappointing. Tests showed that just replacing the native non-recursive lock which was based on the posix sem_t object with a construct using pthread_mutex_t and pthread_cond_t, slowed down execution.

Fixing linux

I decided that there ought ot be no good reason for a pthread_cond_t to be so much slower than a sem_t, so I decided to write my own condition object using a sem_t. To make a long story short, it worked. My emulated condition variable (written using a pthread_mutex_t and a sem_t) is faster than a pthread_condition_t. At least on my dual core virtual box. Go figure.

The making of this new condition variable is a topic for a blog post on its own. I doggedly refused to look up other implementations of condition variables based on semaphores, and wanted to come up with a solution on my own that did not violate the more subtle promises that the protocol makes. Along the way, I was guided by failing unittests of the threading.Barrier class, which relies on the underlying threading.Condition to be true to its promise. I was actually stuck on this problem for a few months, but after a recent eureka moment I think I succeeded.

The results

So, this has been some months in the making. I set up the header files so that various aspects of my patch could be switched on or off, and a macro especially for performance testing then sets these in a sensible way.

giltest.py

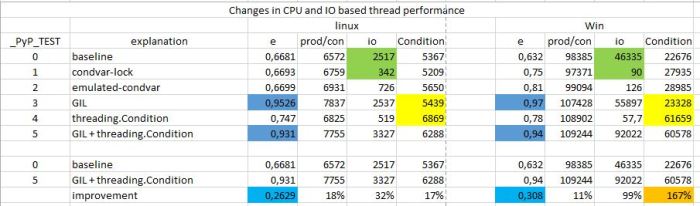

First, the results of the giltest.py file, with various settings of the macro and on windows and linux:

Some notes on this are in order.

- e is “efficiency”, the cpu throughput of two concurrent cpu threads (incrementing a variable) compared to just one thread.

- prod/con is a pair of producer/consumer threads using a threading.Lock primitive, and the column shows the number of transactions in a time-frame (one second)

- The green bit shows why a GIL improvement was necessary since IO threads just couldn’t get any priority over a cpu thread. This column is showing prod/con transactions in the presence of a cpu thread.

- In the end, the improvements on linux are modest. Maybe it is because of my virtual machine. But the Beazley problem is fixed, and IO responsiveness picks up. On windows it is more pronounced.

- The final column is a pair of producer/consumer threads synchronized using a threading.Condition object. Notice on windows how performance picks up almost threefold, ending up being some 60% of a pair that’s synchronized with a threading.Lock.

testserver.py

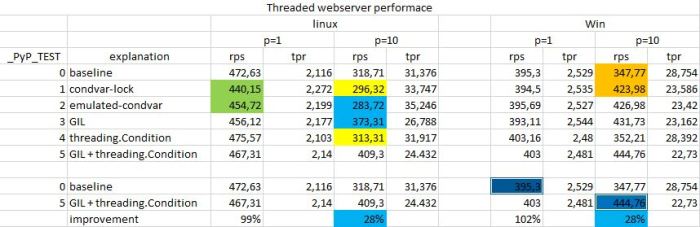

Now for more real-world like results. Here the aim was to show that running many requests in parallel was better handled using the new system. Again, improvements on linux are harder to gauge. In fact, my initial attempts were so disappointing on linux that I almost scrapped the project. But when I thought to rewrite the condition variable, things changed.

- Notice how performance picks up with “emulated condvar” on linux (green boxes) (on windows, it is always emulated)

- p=1 and p=10 are the number of parallel requests that are made. “ab” is single threaded, it shoots off n requests and then waits for them all to finish before doing the next batch, so this is perhaps not completely real-world.

- On linux, rps (requests per second) go up for the multi-threaded case, both when we add the new GIL (better IO responsiveness) and when we add the native threading.Condition. Combined, it improves 30%.

- On windows, we see the same, except that the biggest improvement is when we modify the locks (orange boxes).

- On windows, we achieve better throughput with multithreading. I.e. multiple requests now work better than single requests, whereas on linux, multiple requests performed worse.

Conclusion

These tests were performed on a dual core laptop, running windows 7. The linux tests were done in a virtual ubuntu machine on the same laptop, using two cpus. I’m sure that the virtual nature has its effect on the results, and so, caveat emptor.

Overall, we get 30% improvement in responsiveness when there are multiple threads doing requests using this new threading code in Python Plus. For real world applications serving web pages, that ought to matter.

On windows, the native implementation of threading.Condition provides a staggering 167% boost in performance of two threads doing rendezvous using a condition variable.

While optimizing the linux case, I uncovered how pthread_cond_t is curiously inefficient. A “greedy” implementation of a condition variable using the posix sem_t showed dramatic improvement on my virtual machine. I haven’t replicated this on native linux, but I suspect that the implementors of the pthread library are using explicit scheduling, whereas we rely on the presumably greedy scheduling semantics of the semaphore primitive. But perhaps a separate blog post on this is in order, after some more research.

Fun stuff.