When doing IO, it is sometimes useful for a worker thread to notify Python that something has happened. Previously we have just had the Python main thread poll some external variable for that, but recently we have been experimenting with having the worker thread just grab the GIL and perform Python work itself.

This should be straightforward. Python has an API called PyGILState_Ensure() that can be called on any thread. If that thread doesn’t already have a Python thread state, it will create a temporary one. Such a thread is sometimes called an external thread.

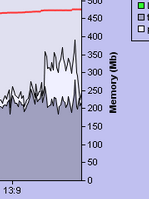

On a server loaded to some 40% with IO, this is what happened when I turned on this feature:

The dark gray area is main thread CPU, (initially at around 40%) and the rest is other threads. Turning on the “ThreadWakeup” feature adds some 20% extra cpu work to the process.

When the main thread is not working, it is idle in a MsgWaitForMultipleObjects() Windows system call (with the GIL unclaimed). So the worker thread should have no problem acquiring the GIL. Further, there is only ever one worker thread doing a PyGILState_Ensure()/PyGILState_Release() at a time, and this is ensured using locking on the worker thread side.

Further tests seem to confirm that if the worker thread already owns a Python thread state, and uses that to acquire the GIL (using a PyEval_RestoreThread() call), this overhead goes away.

This was surprising to me, but it seems to indicate that it is very expensive to “acquire a thread state on demand” to claim the GIL. This is very unfortunate, because it means that one cannot easily use arbitrary system threads to call into Python without significant overhead. These might be threads from the Windows thread pool for example, threads that we have no control over and therefore cannot assign thread state to.

I will try to investigate this further, to see where the overhead is coming from. It could be the extra TLS calls made, or simply the cost of malloc()/free() involved. Depending on the results, there are a few options:

- Keep a single thread state on the side, ready and initialized, for the one external thread that can claim the GIL at a time.

- Allow an external thread to ‘borrow’ another thread state and not use its own.

- Streamline the stuff already present.

Update, Oct. 6th 2011:

Enabling dynamic GIL with thread state caching did nothing to solve this issue.

I think the problem is likely to be that spin locking is in effect for the GIL. I’ll see what happens if I explicitly define the GIL to not use spin locking.

Yeah, the temporary thread state stuff is a bit ugly. Thread states may only have one public field, but they have quite a bit of internal stuff. That said, the only additional things that really happen relative to keeping a thread state around are:

1. The malloc/free of the thread state

2. The TLS update for the thread state pointer

3. The mutex acquisition/release for the adjustments to the thread list in the interpreter state

4. Modifying the linked list of threads to add/remove the thread state (even removal should be quick, since the temporary thread state should still be at the head of the queue)
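To make the four steps concrete, here is a toy model in plain C with POSIX threads. It is not CPython's code: the `toy_*` types and functions are invented stand-ins that only mirror the shape of the work (allocate, set TLS, lock, link, and the reverse), which could serve as a starting point for timing each step in isolation.

```c
#include <assert.h>
#include <pthread.h>
#include <stdlib.h>

/* Toy stand-ins, NOT CPython's real structures. */
typedef struct toy_tstate {
    struct toy_tstate *next;
} toy_tstate;

typedef struct {
    pthread_mutex_t head_lock;   /* guards the list of thread states */
    toy_tstate *head;
    size_t count;
} toy_interp;

static pthread_key_t toy_key;
static pthread_once_t toy_key_once = PTHREAD_ONCE_INIT;

static void toy_make_key(void)
{
    pthread_key_create(&toy_key, NULL);
}

static toy_tstate *toy_ensure(toy_interp *interp)
{
    pthread_once(&toy_key_once, toy_make_key);

    toy_tstate *ts = malloc(sizeof *ts);        /* step 1: malloc */
    if (ts == NULL)
        return NULL;
    pthread_setspecific(toy_key, ts);           /* step 2: TLS update */
    pthread_mutex_lock(&interp->head_lock);     /* step 3: take the mutex */
    ts->next = interp->head;                    /* step 4: push at the head */
    interp->head = ts;
    interp->count++;
    pthread_mutex_unlock(&interp->head_lock);
    return ts;
}

static void toy_release(toy_interp *interp, toy_tstate *ts)
{
    pthread_mutex_lock(&interp->head_lock);     /* step 3 again */
    toy_tstate **link = &interp->head;
    while (*link != NULL && *link != ts)        /* found at once if at head */
        link = &(*link)->next;
    if (*link == ts) {                          /* step 4: unlink */
        *link = ts->next;
        interp->count--;
    }
    pthread_mutex_unlock(&interp->head_lock);
    pthread_setspecific(toy_key, NULL);         /* step 2: clear TLS */
    free(ts);                                   /* step 1: free */
}

/* Runs `rounds` ensure/release cycles; returns how many states remain. */
static size_t toy_cycle(int rounds)
{
    toy_interp interp;
    pthread_mutex_init(&interp.head_lock, NULL);
    interp.head = NULL;
    interp.count = 0;

    for (int i = 0; i < rounds; i++) {
        toy_tstate *ts = toy_ensure(&interp);
        if (ts != NULL)
            toy_release(&interp, ts);
    }

    pthread_mutex_destroy(&interp.head_lock);
    return interp.count;
}
```

None of these operations looks expensive on its own in this model, which is consistent with the surprise at the measured overhead.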

The approach of doing a single PyGILState_Ensure() early on and then using Py_BEGIN_ALLOW_THREADS and Py_END_ALLOW_THREADS (or the equivalent), thus keeping the thread state around, is always going to be quicker though: the above steps then never happen during normal runtime.

I’m admittedly surprised the overhead is *that* high, though. I’ll be interested in your findings if you manage to narrow down the culprit any further.

Indeed. I was surprised myself. One thing missing from this is stats on how often this is happening per second. I’ll post more info when I have it.